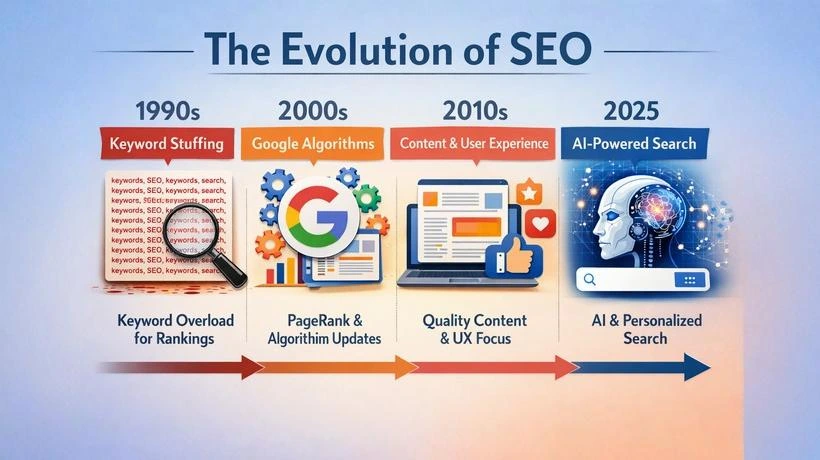

Search engine optimisation (SEO) has changed beyond recognition since the first websites appeared in the mid-1990s. What began as a crude exercise in keyword repetition and meta tag manipulation has transformed into a sophisticated, multi-disciplinary field encompassing content strategy, natural language processing, user experience design, and now artificial intelligence. Understanding how SEO evolved is not just a history lesson — it is the only reliable guide to where it is going next.

This article traces the full arc of SEO development, from the Wild West days of the early web through every major Google algorithm shift, to the emerging disciplines of Answer Engine Optimisation (AEO) and Generative Engine Optimisation (GEO) that define search strategy in 2025 and beyond.

Era 1: The Wild West of Early Search (1991–1997)

The World Wide Web was publicly opened in 1991, and within a few years the volume of pages was already too large for human editors to catalogue manually. Early search tools such as Archie and Veronica (which indexed FTP archives and Gopher menus respectively), followed by web-era players like Yahoo! Directory (1994), AltaVista (1995), and Excite, emerged to solve this problem.

These early systems relied heavily on meta keywords and basic on-page signals to determine relevance. A webmaster who placed a target keyword in the page title, the meta keywords tag, and the first paragraph of text stood a strong chance of ranking for that term. The algorithms were simple enough that gaming them was trivially easy — and the SEO community quickly figured this out.

Keyword stuffing became widespread almost immediately. Webmasters discovered they could stuff dozens of repetitions of a keyword into white text on a white background — invisible to readers but readable by crawlers. Hidden divs, invisible pixel fonts, and comment-block stuffing were all standard practice. There was no concept of "quality" in early algorithmic ranking; only keyword frequency and page structure.

The term "search engine optimisation" itself entered common usage around 1997, when practitioners began formally documenting these techniques. Early SEO was entirely technical and required no content expertise whatsoever — the content on a page was almost irrelevant to its ranking.

Era 2: Google Arrives and Changes Everything (1998–2003)

In September 1998, Larry Page and Sergey Brin launched Google from a Stanford University server room. The search engine was built on a fundamentally different premise from its competitors: instead of ranking pages primarily by on-page keyword signals, Google's PageRank algorithm used the structure of the web itself as a relevance signal.

PageRank treated hyperlinks as votes of confidence. A link from Page A to Page B was interpreted as Page A endorsing Page B. More links from more authoritative pages meant higher rankings. This was a revolutionary shift: for the first time, the broader web community — not just the webmaster — influenced a page's rank.
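The "links as votes" idea can be illustrated with a minimal power-iteration sketch. This is a simplified textbook formulation, not Google's production system; the damping factor of 0.85, the iteration count, and the four-page `toy_web` graph are assumptions chosen for illustration.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Estimate PageRank scores for a small link graph.

    links maps each page to the list of pages it links to.
    damping and iterations are illustrative assumptions.
    """
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page receives a small baseline share of rank.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its "vote" evenly across its outbound links.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outbound links spreads its rank evenly.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


# Hypothetical four-page web: C is linked to by A, B, and D.
toy_web = {
    "A": ["B", "C"],
    "B": ["C"],
    "C": ["A"],
    "D": ["C"],
}
ranks = pagerank(toy_web)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(page, round(score, 3))
```

In this toy graph, page C ends up with the highest score because it receives the most inbound links, and page A benefits from being endorsed by C. Page D, which nobody links to, keeps only the baseline share.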

Google's search quality was demonstrably superior to competitors from launch. It indexed more pages, returned more relevant results, and was far harder to spam with on-page manipulation alone. Within a few years it had displaced AltaVista, Excite, and Lycos to become the dominant global search engine — a position it has never relinquished.

For SEO practitioners, the PageRank era introduced an entirely new discipline: link building. Acquiring backlinks from external websites became the most powerful lever for improving rankings. This era also saw the rise of early SEO agencies, forums like WebmasterWorld, and the commercialisation of search optimisation as a professional service.

Era 3: The Link Building Gold Rush (2004–2010)

If the PageRank era established that links were currency, the mid-2000s turned link acquisition into an industrial operation. The period from roughly 2004 to 2010 was characterised by aggressive, frequently manipulative link building schemes that exploited Google's heavy reliance on backlink quantity as a ranking signal.

Common tactics of this era included link farms (networks of low-quality sites linking to each other), paid link schemes (purchasing links from high-authority pages), article spinning (generating hundreds of near-duplicate articles with embedded links for distribution across directories), and reciprocal link exchanges. Private Blog Networks (PBNs) — collections of expired domains with retained authority, built solely to manufacture links — became a sophisticated and profitable black-hat industry.

On-page SEO in this period was only marginally more nuanced than in the 1990s. Keyword density was still a guiding metric; titles and headings were stuffed with exact-match phrases. Content was produced cheaply and at scale — quality was secondary to volume and keyword saturation. A page that ranked rarely needed to satisfy the user; it simply needed to rank.
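For readers unfamiliar with the metric, keyword density can be sketched as the share of a page's words taken up by occurrences of a target phrase. Definitions varied between tools of the era; the formula below and the sample text are assumptions for illustration only.

```python
import re


def keyword_density(text, keyword):
    """Return the fraction of words in text that belong to occurrences of keyword."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return 0.0
    phrase = keyword.lower().split()
    size = len(phrase)
    # Count every position where the phrase appears as consecutive words.
    hits = sum(
        1 for i in range(len(words) - size + 1) if words[i:i + size] == phrase
    )
    return hits * size / len(words)


# Hypothetical stuffed title from the period.
stuffed = "Cheap flights cheap flights book cheap flights today"
print(keyword_density(stuffed, "cheap flights"))  # 0.75
```

A value this high reads as spam to a human, which is exactly the gap between ranking and usefulness that later updates set out to close.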

Google responded throughout this period with periodic algorithm updates, but the most significant changes were still to come. The fundamental architecture of PageRank, unchanged since 1998, created incentive structures that were inherently gameable — and the SEO industry had become extremely skilled at gaming them.

Era 4: The Quality Content Revolution (2011–2013)

2011 marks the most consequential year in SEO history. In February of that year, Google launched the Panda algorithm update: a sweeping, site-wide quality assessment that demoted entire domains for hosting thin, low-quality, or duplicate content, regardless of their link profiles.

Panda used machine learning to identify content that failed to genuinely help users. It targeted content farms (sites like Demand Media's eHow, which produced thousands of shallow articles on trending search queries), websites with high ad-to-content ratios, and pages with thin or boilerplate text. Overnight, sites that had ranked comfortably for years lost 40%, 60%, even 80% of their organic traffic. Demand Media lost an estimated $300 million in market value within weeks of the update.

Fourteen months later, in April 2012, Google launched Penguin — targeting the link building manipulation that Panda had not addressed. Penguin penalised sites with unnatural link profiles: links from irrelevant low-quality domains, links with keyword-rich anchor text that looked commercially motivated, and links from known link farms and paid networks. Years of link building work became an active liability overnight for thousands of websites.

Together, Panda and Penguin represented a fundamental restructuring of SEO incentives. The message was unmistakable: content quality and natural link acquisition were now the only sustainable strategies. The age of manipulation was over — at least in its most brazen forms.

❌ Pre-Panda/Penguin SEO (Before 2011)

- High keyword density targets

- Mass article spinning and directory submission

- Paid link schemes

- Private Blog Networks (PBNs)

- Thin content at scale

- Content farms

✅ Post-Panda/Penguin SEO (After 2012)

- Content depth and user value

- Editorial link acquisition

- Natural anchor text diversity

- Site-wide quality signals

- Comprehensive, original research

- Digital PR and brand mentions

Era 5: Semantic Search and Understanding Intent (2013–2015)

Having addressed content quality and link manipulation, Google's next challenge was a more fundamental one: understanding what users actually meant by their queries, not just which words they typed. The solution was Hummingbird, launched quietly in August 2013 and publicly confirmed in September.

Hummingbird was not an update to Google's existing algorithm; it was a replacement of the core algorithm itself. Where previous systems treated a query as a collection of keywords to be matched, Hummingbird treated the entire query as a sentence with meaning. It could understand that "how do I get rid of a cold quickly" and "fast cold remedies" expressed the same intent, even though the only word they share is "cold". It could understand context, modifiers, and conversational phrasing.
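A rough way to see why keyword matching struggles with such paraphrases is to measure the lexical overlap between the two example queries. The sketch below uses Jaccard similarity over lowercase word sets. It is only a stand-in for pre-semantic matching, not a description of how any Google system worked.

```python
def tokens(query):
    """Split a query into a set of lowercase words."""
    return set(query.lower().split())


def jaccard(query_a, query_b):
    """Return the shared-word ratio between two queries (0.0 to 1.0)."""
    a, b = tokens(query_a), tokens(query_b)
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


q1 = "how do I get rid of a cold quickly"
q2 = "fast cold remedies"

print(tokens(q1) & tokens(q2))    # {'cold'}
print(round(jaccard(q1, q2), 3))  # 0.091
```

With only one shared word out of eleven distinct words, a purely lexical system would treat these queries as nearly unrelated, even though a human reader sees the same intent.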

Hummingbird also marked Google's first serious step toward answering questions directly rather than simply listing pages. Featured snippets — boxes at the top of search results showing a direct answer extracted from a web page — began appearing at scale from 2014 onwards. This was the earliest visible manifestation of what would eventually become AI Overviews.

For SEO practitioners, Hummingbird necessitated a new way of thinking about keyword research. Optimising for a single exact-match keyword phrase became less relevant; satisfying the underlying user intent behind a cluster of related queries became the goal. The concept of topic authority — being the most comprehensive, trustworthy source on a given subject — began to replace keyword targeting as the central strategic objective.

Era 6: Mobile, Local Search, and User Experience (2015–2017)

By 2015, mobile devices had overtaken desktop computers as the primary means of accessing the internet in most countries. Google's response was decisive and swift.

In April 2015, in an update the industry immediately nicknamed "Mobilegeddon", Google began using mobile-friendliness as a ranking signal for mobile search results. Sites without responsive design or a dedicated mobile version dropped significantly in mobile rankings. Given that mobile traffic already accounted for the majority of searches in many categories, this was not a minor adjustment; it was a sea change.

In 2016, Google announced its transition to mobile-first indexing: instead of crawling and indexing the desktop version of a site as the primary source, Google would use the mobile version. The change was phased in over several years: it became the default for newly indexed sites in 2019, and the remaining sites were migrated in the years that followed. The implication was stark: if your mobile site had less content, fewer images, or a weaker structure than your desktop site, your rankings would suffer even for desktop users.

This era also saw local SEO mature into its own sub-discipline. Google's "Pigeon" update (2014) and "Possum" update (2016) dramatically improved the local search algorithm, tying local results more tightly to a user's physical location and the authority of the Google Business Profile (then called Google My Business). For businesses with physical locations, ranking in the local "3-pack" became as valuable — often more valuable — than ranking on page one of organic results.

Page speed, long a theoretical concern, became a formal mobile ranking signal with the Speed Update in July 2018. Google's data showing that pages taking longer than three seconds to load lost more than half their mobile visitors created urgent commercial pressure to invest in performance engineering.

Era 7: AI and Natural Language Processing Enter Search (2018–2020)

The years 2018 to 2020 saw artificial intelligence move from the background of Google's operations to the foreground. Two landmark events defined this period: the Medic Update (August 2018) and the introduction of BERT (October 2019).

The Medic Update was a broad core algorithm update that disproportionately affected health, finance, and legal websites — collectively dubbed YMYL (Your Money or Your Life) categories. Google's quality raters had long assessed content in these categories by the standard of E-A-T (Expertise, Authoritativeness, Trustworthiness), and Medic appeared to algorithmically operationalise this assessment at scale. Websites lacking clear author credentials, medical citations, or institutional backing lost massive traffic; those demonstrating genuine expertise gained.

BERT (Bidirectional Encoder Representations from Transformers) was a pivotal moment in the relationship between AI research and search. BERT is a pre-trained natural language processing model developed by Google Research. Applied to search, it dramatically improved Google's ability to understand prepositions, context, and nuance in queries — particularly long-tail conversational queries where the meaning depends heavily on word order and relational context.

The practical SEO implication of BERT was that writing naturally for human readers — using full sentences, proper grammar, and contextual nuance — became more algorithmically valuable than writing for keyword density. Content that read like a human wrote it for other humans was precisely what BERT was calibrated to reward.

Era 8: E-E-A-T, Helpful Content, and Page Experience (2021–2022)

The early 2020s brought two significant additions to Google's quality evaluation framework: the expansion of E-A-T to E-E-A-T (adding a second "E" for Experience), and the Helpful Content Update (August 2022).

The additional "E" for Experience acknowledged something BERT alone could not assess: whether the content creator had personal, first-hand experience with the subject they were writing about. A product review written by someone who had actually used the product was now explicitly valued above one assembled from spec sheets and competitor reviews. This change particularly affected affiliate content and review sites, where much content had long been written without product access.

The Helpful Content Update was perhaps the most philosophically significant update since Panda. It introduced a site-wide classifier: if a substantial proportion of a website's content was judged to have been produced primarily to rank in search results — rather than to genuinely help readers — the entire site could be downranked, not just the offending pages. Google's own description of "helpful content" centred on one core question: "Is this content created for people first, or for search engines first?"

In parallel, Google launched its Page Experience update in June 2021, incorporating Core Web Vitals as ranking signals: Largest Contentful Paint (LCP), Cumulative Layout Shift (CLS), and First Input Delay (FID), the last of which was replaced by Interaction to Next Paint (INP) in March 2024. This formalised the relationship between site performance and search ranking that had been building since the Speed Update of 2018.
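As a concrete illustration, the sketch below rates a page's measurements against the "good" and "poor" boundaries that Google publishes for each Core Web Vitals metric. The threshold values come from Google's public guidance rather than from this article, and the sample measurements are hypothetical. Google evaluates these metrics at the 75th percentile of real user visits, which this simplified check does not model.

```python
# (good_limit, poor_limit) per metric, taken from Google's published guidance.
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "FID": (100, 300),   # milliseconds (retired in favour of INP)
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout-shift score
}


def rate_metric(name, value):
    """Classify a single measurement as good, needs improvement, or poor."""
    good_limit, poor_limit = THRESHOLDS[name]
    if value <= good_limit:
        return "good"
    if value <= poor_limit:
        return "needs improvement"
    return "poor"


def rate_page(metrics):
    """Classify every metric supplied for a page."""
    return {name: rate_metric(name, value) for name, value in metrics.items()}


# Hypothetical field measurements for one page.
report = rate_page({"LCP": 2.1, "INP": 350, "CLS": 0.3})
print(report)
```

For these sample values the page loads its main content quickly enough, responds somewhat slowly to interaction, and has a layout-shift problem that would need fixing first.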

Also in 2021, Google introduced MUM (Multitask Unified Model), a multimodal AI model that Google described as significantly more powerful than BERT. MUM was trained across 75 languages and, at launch, could understand text and images together, with Google stating that it planned to extend the model to video and audio. While MUM's direct impact on standard search results has been gradual, it underpins Google's ability to answer multi-step, nuanced queries that would have been impossible to handle algorithmically just a few years earlier.

Era 9: The AI Search Revolution (2023–2025)

The release of ChatGPT in November 2022 triggered the most disruptive period in the history of search. For the first time in two decades, Google faced a credible existential competitor to its core product: a conversational AI capable of answering complex questions without returning a list of links at all.

Google's response was Search Generative Experience (SGE), launched in beta in May 2023 and renamed AI Overviews at Google I/O 2024. AI Overviews appear above standard search results for many queries, presenting a synthesised, paragraph-length answer generated by Google's AI — with citations to source pages. This represented the most visible structural change to the Google search results page since the introduction of featured snippets a decade earlier.

The implications for SEO were profound and are still being worked out. Click-through rates on organic results below AI Overviews have declined for informational queries, as users receive answers directly from the SERP without clicking through to source pages. However, pages cited within AI Overviews gain significant brand exposure and targeted referral traffic. The optimisation goal has shifted from "rank at position one" to "be the source Google's AI trusts enough to cite."

Simultaneously, AI-native search tools — Perplexity, ChatGPT Search (launched October 2024), Microsoft Copilot (integrating Bing results), and You.com — have collectively drawn substantial traffic away from traditional search. These tools do not present ranked lists of blue links; they generate direct answers with citations. The web traffic implications for publishers are significant and growing.

This new landscape has given rise to two new optimisation disciplines that sit alongside traditional SEO: Answer Engine Optimisation (AEO) and Generative Engine Optimisation (GEO).

Complete Reference: Major Google Algorithm Updates

The following table summarises the most significant Google algorithm updates from 2011 to 2024, what each one targeted, and the SEO response it required.

| Year | Update Name | What It Targeted | SEO Response Required |
|---|---|---|---|
| 2011 | Panda | Thin content, content farms, duplicate content, high ad-to-content ratios | Invest in depth, originality, and editorial quality. Remove or consolidate thin pages. |
| 2012 | Penguin | Manipulative link building: paid links, link farms, over-optimised anchor text | Disavow toxic links. Shift to editorial link acquisition via digital PR. |
| 2012 | Pirate | Copyright infringement and DMCA violations | Remove infringing content. Ensure original content is properly attributed. |
| 2013 | Hummingbird | Core algorithm rewrite focused on query intent and semantic understanding | Optimise for user intent, not individual keywords. Use natural language throughout. |
| 2014 | Pigeon | Local search relevance: tied local results more tightly to geographic signals | Optimise Google Business Profile. Build local citations and location-specific content. |
| 2015 | Mobilegeddon | Non-mobile-friendly pages in mobile search results | Implement responsive design. Pass Google's Mobile-Friendly Test. |
| 2015 | RankBrain | Machine learning component added for query interpretation and result re-ranking | Focus on user satisfaction metrics. Write content that answers the full query context. |
| 2016 | Possum | Local search: diversified results by filtering near-duplicate businesses | Differentiate your business clearly in your Google Business Profile and on-page signals. |
| 2018 | Medic | YMYL (health, finance, legal) content lacking E-A-T signals | Add author credentials, cite sources, build topical authority, acquire relevant backlinks. |
| 2018 | Speed Update | Very slow-loading pages in mobile search | Optimise page load performance. Compress images, defer JavaScript, enable caching. |
| 2019 | BERT | Core NLP model for understanding query context and nuance | Write naturally for humans. Avoid keyword stuffing. Use full, contextual sentences. |
| 2021 | Page Experience / Core Web Vitals | Poor LCP, CLS, and FID scores as UX quality signals | Pass Core Web Vitals thresholds. Eliminate layout shift. Improve server response times. |
| 2021 | MUM | Multimodal AI for complex, multi-step query understanding | Create comprehensive, multi-format content that addresses topics from multiple angles. |
| 2022 | Helpful Content Update | Content produced primarily for search engines rather than users | Audit and remove or improve content created solely to rank. Demonstrate genuine expertise. |
| 2022 | E-E-A-T expansion | Added "Experience" to quality evaluator guidelines alongside Expertise, Authority, Trust | Demonstrate first-hand experience. Use case studies, personal data, and original research. |
| 2023 | March 2023 Broad Core + Spam | AI-generated content produced at scale to manipulate rankings | Ensure all AI-assisted content is human-edited, accurate, and adds genuine value. |
| 2023 | SGE / AI Overviews (beta) | New SERP format: AI-generated answers above organic results | Optimise for AEO and GEO. Structure content to be citable by AI systems. |
| 2024 | March 2024 Core Update + Spam Policies | Lowest-quality, least-helpful content at scale; "Parasite SEO" on authoritative domains | Remove third-party content exploiting domain authority. Audit all pages for genuine user value. |

Answer Engine Optimisation (AEO): Optimising for Direct Answers

Answer Engine Optimisation (AEO) is the practice of structuring content so that search engines — and AI systems — select it as the definitive, direct answer to a specific question. Where traditional SEO aimed to rank a page within a list of results, AEO aims to make a page the answer — the source that appears in a featured snippet, a voice search response, or an AI Overview.

AEO is a response to a fundamental shift in user behaviour. Google's own research has consistently shown that users increasingly expect direct answers, not lists of pages to browse. The rapid growth of voice search (which, by definition, returns a single spoken answer rather than ten blue links) accelerated this trend significantly.

How to implement AEO effectively

The most effective AEO techniques centre on directly answering the specific question a user is likely to ask, in the format most useful for extraction and re-presentation by a search or AI system:

- Use question-format headings (H2 and H3). Structure your content around the precise questions your audience asks. The heading "What is Answer Engine Optimisation?" followed immediately by a concise, self-contained definition is the canonical AEO format. This mirrors the structure of a FAQ — one of the most reliably snippet-captured formats in search.

- Write a concise definition in the first sentence. Featured snippets and AI Overviews typically extract the first 40–60 words after a heading. Lead with the answer; never bury it in context.

- Use structured data markup. FAQ schema (`FAQPage`), HowTo schema (`HowTo`), and Speakable schema explicitly signal to Google which parts of your content are answers to questions. This improves eligibility for rich results and voice search selection.

- Create content that answers follow-up questions. Voice and conversational search often involves a sequence of related questions. Pages that comprehensively address an entire topic area, not just a single query, are more likely to be returned across the full conversation arc.

- Optimise for conversational phrasing. Voice queries are significantly longer and more conversational than typed queries. A user searching by voice is far more likely to say "What is the best way to improve my website's page speed?" than to type "improve page speed." Your content should match this natural phrasing.
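The structured data technique in the list above can be sketched as follows. This is a minimal example that builds Schema.org `FAQPage` markup in JSON-LD and wraps it in a script tag for a page's HTML. The helper names `faq_jsonld` and `as_script_tag` and the sample question and answer are hypothetical, and valid markup does not guarantee that a search engine will display a rich result.

```python
import json


def faq_jsonld(pairs):
    """Build a Schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data):
    """Serialise the object as a JSON-LD script tag for embedding in HTML."""
    payload = json.dumps(data, indent=2)
    # Escape "</" so answer text cannot close the script tag early.
    payload = payload.replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + payload + "\n</script>"


# Hypothetical question and answer, mirroring the heading format described above.
faq = faq_jsonld([
    (
        "What is Answer Engine Optimisation?",
        "Answer Engine Optimisation (AEO) is the practice of structuring "
        "content so that search engines and AI systems select it as the "
        "direct answer to a specific question.",
    ),
])
tag = as_script_tag(faq)
print(tag)
```

Keeping the marked-up answer text identical to the visible answer on the page is the safer choice, since markup that diverges from on-page content tends to be ignored or treated as misleading.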

Generative Engine Optimisation (GEO): Being Cited by AI

Generative Engine Optimisation (GEO) is the emerging discipline of optimising content to be discovered, cited, and accurately represented by large language model-powered search tools — including Google's AI Overviews, ChatGPT Search, Perplexity, Microsoft Copilot, and future AI-native search platforms.

GEO differs from both traditional SEO and AEO in a crucial way: it optimises not just for a single search result, but for how an AI system synthesises information across many sources into a generated response. The question is no longer just "does Google rank my page?" but "does the AI cite my page when answering questions on this topic?"

Why GEO matters now

Large language models are now being used as primary research tools by hundreds of millions of people. When a user asks ChatGPT or Perplexity a research question, they receive a synthesised answer with citations — and the source pages cited receive brand exposure, perceived authority, and direct traffic from users who want to explore further. Being absent from AI-generated answers on your core topics is increasingly a meaningful competitive disadvantage.

Core GEO strategies

- Prioritise information density. AI systems favour content with a high ratio of concrete facts, data, and definitions to filler prose. Every paragraph should deliver a substantive assertion or piece of information. Introductory paragraphs that restate the question without advancing the answer are invisible to LLMs in practice.

- Cite specific data and sources explicitly. Sentences structured as "According to [Organisation], [specific finding]" are the gold standard for AI citation. LLMs are trained to treat cited, attributed claims as higher-quality signals than unattributed assertions. Original research, proprietary data, and exclusive findings dramatically increase citation probability.

- Use clear entity naming. Always use the full, official name of people, organisations, products, technologies, and locations on first reference. Avoid pronouns and abbreviations. LLMs process text statistically — ambiguous entity references reduce the confidence with which a model can associate your content with the correct topic.

- Implement structured data comprehensively. Schema.org markup in JSON-LD format makes your content machine-readable at a structural level beyond plain HTML. `Article`, `FAQPage`, `HowTo`, `Dataset`, and `Organization` schemas are particularly valuable for AI parsers.

- Structure content for extraction. Use tables for comparative data. Use numbered lists for processes. Use definition-format headings (question → concise answer → elaboration) for conceptual explanations. These formats can be cleanly extracted and synthesised by a generative model without creating ambiguity or truncation errors.

- Build topical authority, not just page authority. AI systems assess trustworthiness at the domain and topic level. A site that comprehensively covers a subject area across many interconnected, high-quality pages is more likely to be treated as an authoritative source than a single excellent page on an otherwise thin domain.

- Maintain factual accuracy and currency. LLMs increasingly use retrieval-augmented generation (RAG) — fetching current web content to supplement their training data. Pages with outdated, contradicted, or factually incorrect information are actively downranked by these retrieval systems. Keep all statistics, facts, and dates current.
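Several of the strategies above (clear entity naming, structured data, and content currency) meet in a page's `Article` markup. The sketch below shows one way to generate it, with explicit author and publisher entities and machine-readable publication and modification dates. The function name `article_jsonld` and every sample value are hypothetical, and how any given AI system weighs these fields is not publicly documented.

```python
import json
from datetime import date


def article_jsonld(headline, author, publisher, published, modified):
    """Build a Schema.org Article object with named entities and ISO dates."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        # Full, unambiguous names for the people and organisations involved.
        "author": {"@type": "Person", "name": author},
        "publisher": {"@type": "Organization", "name": publisher},
        # ISO 8601 dates let a parser judge how current the page is.
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }


# Hypothetical values for illustration only.
article = article_jsonld(
    headline="The Evolution of Search Engine Optimisation",
    author="Jane Example",
    publisher="Example Media Ltd",
    published=date(2025, 1, 15),
    modified=date(2025, 3, 2),
)
print(json.dumps(article, indent=2))
```

Updating `dateModified` only when the content has genuinely been revised keeps the signal honest and consistent with the visible page.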

What Has Never Changed in 30 Years of SEO

Amid all this transformation, some principles have remained constant across three decades and every major algorithm shift:

- Genuinely useful content wins. Every algorithm update — from Panda in 2011 to the Helpful Content Update in 2022 — has been an attempt to better identify and reward content that genuinely helps people. The best long-term SEO strategy has always been to produce the most helpful, accurate, and comprehensive answer to the questions your audience is asking.

- Trust and authority matter. Whether expressed as PageRank in 1998, E-A-T in 2014, or AI citation probability in 2025, the concept that some sources are more credible than others has been central to every ranking system. Building credibility — through accurate information, authoritative citations, institutional relationships, and a consistent track record — has always paid dividends.

- Technical fundamentals are non-negotiable. Crawlability, indexability, page speed, and mobile compatibility have grown in complexity but not changed in principle. A page that cannot be properly accessed, rendered, and understood by a crawler has never ranked well, regardless of the era.

- User experience drives rankings. From early click-through rate signals to modern Core Web Vitals, search engines have always attempted to reward the pages their users find most satisfying. Designing for the reader — not for the algorithm — has consistently outperformed the reverse.

The Future of SEO: What Comes Next

Predicting the future of SEO has historically been a humbling exercise — few in 2010 anticipated BERT, and fewer still anticipated AI Overviews. However, several directional trends are sufficiently established to project with reasonable confidence.

AI-mediated search will continue to grow. Google, Microsoft, OpenAI, and Perplexity are all investing heavily in AI-first search experiences. The proportion of queries answered directly by AI — without a traditional results page click — will increase. This makes AEO and GEO progressively more important relative to traditional rank-and-click optimisation.

Brand and entity authority will become more central. Knowledge Graph recognition — being a known, verified entity in Google's semantic database — is increasingly a prerequisite for appearing in AI-generated answers. Building a strong, consistent brand presence across authoritative publications, Wikipedia, and structured data will grow in SEO importance.

Multimodal search will expand. With MUM and its successors capable of processing images, audio, and video alongside text, optimising non-textual content for discoverability — through alt text, transcripts, structured data, and metadata — will become standard practice rather than an edge case.

First-party data and original research will be decisive. As AI-generated content continues to flood the web with plausible but derivative text, original data — surveys, studies, proprietary analysis, unique case studies — will become increasingly scarce and therefore increasingly valuable as a citation source for both human readers and AI systems.

The SEO practitioners who will thrive are those who understand that the discipline has always been, at its core, about earning trust: from users, from search engines, and increasingly, from AI systems that synthesise the web's knowledge into direct answers. The methods change; the goal does not.